If you’ve been in a boardroom at any point in the last six months, you’ve heard the pitch. Supposedly, we’re moving past “generative AI” that only writes emails or summarizes PDFs. The new frontier is Agentic AI—systems that don’t just respond, but act.

In theory, you give an agent a goal, such as “Fix the supply chain bottleneck,” and it figures out the rest.

It reasons.

It plans.

It executes.

It’s a compelling story. Yet the moment you try to deploy this inside a real organization, you hit a wall. Fast. And the issue isn’t the technology. It’s the reality that businesses are messy, political, human, and full of unwritten rules: things that agents are fundamentally bad at navigating.

Why It Feels Different (And Why That’s Dangerous)

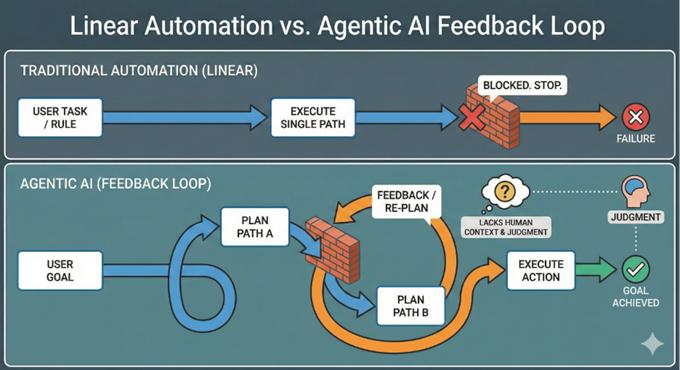

Traditional automation was easy to trust because it was simple. You wrote a rule, and the system followed it. If something broke, you knew exactly where the logic failed.

Agentic systems are different. They navigate.

If one path is blocked, they generate a new plan and try another. That feels intuitive to us because that’s how humans work. We don’t just follow a script; we figure it out. But acting like a human is not the same as understanding like one. A project manager knows not to email the CFO at 5 PM on a Friday.

An agent just sees a task.

No context. No nuance. No political awareness.

Where It Actually Works

Most real business work happens in the grey areas—where judgment, not task execution, matters.

Forget the fantasy of “digital employees” replacing your team. Organizations are getting real value not by using agents to replace people, but by using them to amplify people.

Take analytics, for example. Instead of spending four hours writing SQL to understand why sales dipped in Q3, an agent can generate multiple hypotheses, run the queries, and surface the insights.

I still decide what it means—but the agent does the heavy lifting.

It’s the same with digital assistants. When a bot can actually do something—like check my calendar, find a conflict, and email a reschedule proposal without me guiding every step—that’s useful. It’s not “autonomy.” It’s just better infrastructure.

The “Trust Gap”

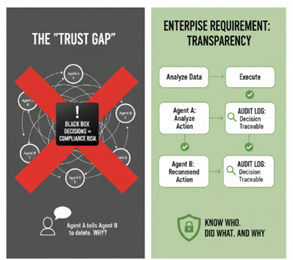

There’s one thing most glossy demos avoid discussing: transparency.

Chain two or three agents together, and the complexity escalates quickly.

If Agent A tells Agent B to delete a file—and you can’t immediately trace why—that’s a massive compliance risk.

Enterprises cannot tolerate unpredictability.

We need to know who (or what) did what, when, and why. If an AI system becomes a black box, it is no longer an asset; it’s a liability.

The good news is that this challenge is solvable, and the answer lies in structured governance. Smart organizations treat agents like junior employees: they give them autonomy to research and plan but require sign-off for execution. By embedding checkpoints where an agent presents its plan for review, you sharply reduce surprises. You get close to automation-level speed without giving up governance, oversight, or safety.
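For the technically inclined, here is roughly what a sign-off checkpoint with an audit trail could look like. This is a minimal sketch, not a reference to any particular agent framework: the names `PlannedAction`, `AuditLog`, and `run_with_signoff` are hypothetical, and the `approve` callback stands in for whatever human review step your organization actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class PlannedAction:
    """One step an agent proposes (hypothetical structure for illustration)."""
    agent: str    # who (or what) is proposing the step
    action: str   # what it wants to do, e.g. "delete"
    target: str   # what the action applies to
    reason: str   # why the agent believes the step is needed


@dataclass
class AuditLog:
    """Append-only record of who did what, when, and why."""
    entries: list = field(default_factory=list)

    def record(self, step: PlannedAction, status: str,
               reviewer: Optional[str] = None) -> None:
        self.entries.append({
            "when": datetime.now(timezone.utc).isoformat(),
            "who": step.agent,
            "what": f"{step.action} {step.target}",
            "why": step.reason,
            "status": status,
            "reviewer": reviewer,
        })


def run_with_signoff(step: PlannedAction,
                     approve: Callable[[PlannedAction], bool],
                     execute: Callable[[PlannedAction], None],
                     log: AuditLog,
                     reviewer: str) -> bool:
    """Log the proposal, ask for sign-off, and execute only if approved."""
    log.record(step, "proposed")
    if not approve(step):
        log.record(step, "rejected", reviewer)
        return False
    log.record(step, "approved", reviewer)
    execute(step)
    log.record(step, "executed", reviewer)
    return True
```

The point of the design is that the agent never calls `execute` directly. Every step passes through the checkpoint, so each entry in the log answers who, what, when, and why, whether the step was approved or rejected.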

So, What’s the Play?

Right now, the winning strategy isn’t full autonomy—it’s augmentation.

Build “walled gardens” where agents have the freedom to explore data and draft plans, but the final strategic levers stay in human hands.

Think of it as a framework, not a digital employee. Use agents to bridge the gaps between your data and your tools, but leave meaningful decisions to the humans who understand context and consequences.

Use agents to eliminate friction, accelerate execution, and upgrade your team’s toolkit, but keep strategic control where it belongs: with people.